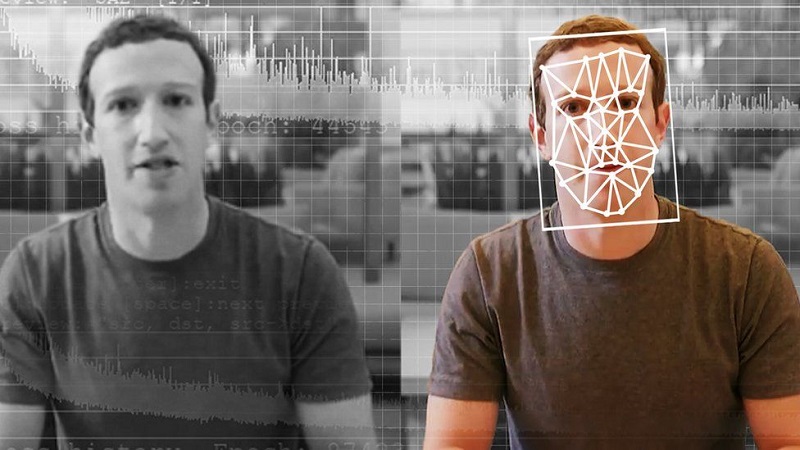

Microsoft has developed a tool for spotting deepfakes, computer-manipulated images in which one person's likeness has been replaced with that of another. The software analyses photos and videos and produces a confidence score indicating how likely it is that the material has been artificially created.

Deepfakes came to prominence in early 2018 after a developer adapted cutting-edge artificial intelligence techniques to create software that swapped one person's face for another. The process worked by feeding a computer still images of one person and video footage of another. The software then used this material to generate a new video featuring the former's face in place of the latter's, with matching expressions, lip-synch and other movements.

The firm applied its own machine-learning techniques to a public dataset of about 1,000 deepfaked video sequences and then tested the resulting model against an even bigger face-swap database created by Facebook. Manipulated photos and videos play a major role in spreading often quite convincing disinformation on social media.

Computer-generated photos of people's faces have also become common hallmarks of sophisticated foreign interference campaigns, where they are used to make fake accounts appear more authentic. A tool that can flag such material could help in the fight against online disinformation.
